We are back to our favourite topic: coin-flipping. Anna and Ben are playing a game of flipping coins. Each aims for a pattern, and whoever gets their pattern wins. Anna chooses head-tail (HT) and Ben chooses head-head (HH). Who do you think will win?

You may assume that, since the probability of getting HT or HH in two tosses is the same (1 in 4), the chances of winning should be identical. But the game is not about two tosses. It is about a long run of tosses and then counting who got their pattern more often. Let's play the game first before getting into any theory. The following R code runs the game 10000 times, with 5000 flips each time, and calculates the average number of times each player gets their pattern.

```{r}
library(stringr)

redo <- 10000   # number of simulated games
flip <- 5000    # coin flips per game

# Ben's pattern (HH): count its occurrences in each game.
# str_count() counts non-overlapping matches, so "H H H" holds one "H H".
streak <- replicate(redo, {
  toss <- sample(c("H", "T"), flip, replace = TRUE, prob = c(1/2, 1/2))
  toss1 <- paste(toss, collapse = " ")
  str_count(toss1, "H H")
})
mean(streak)

# Anna's pattern (HT)
streak <- replicate(redo, {
  toss <- sample(c("H", "T"), flip, replace = TRUE, prob = c(1/2, 1/2))
  toss1 <- paste(toss, collapse = " ")
  str_count(toss1, "H T")
})
mean(streak)
```

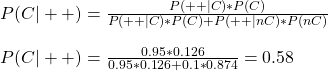

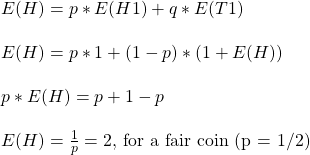

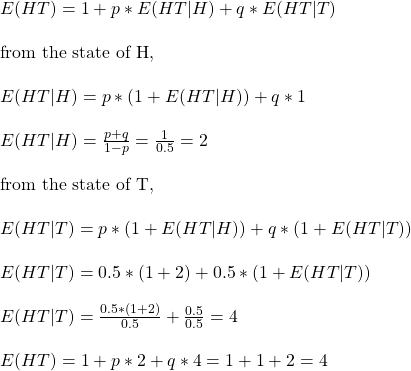

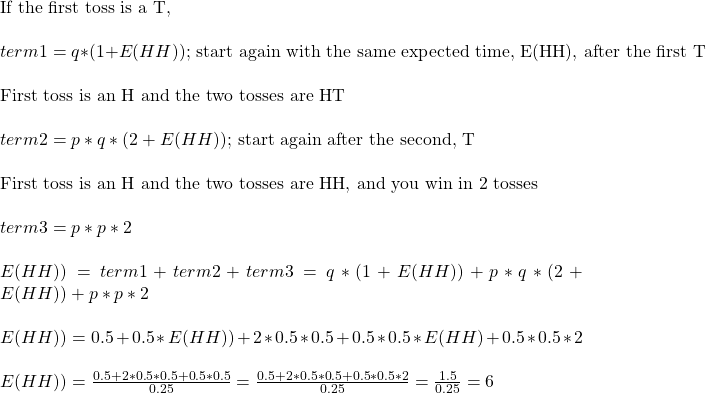

The answer I got was 833.17 for Ben (HH) and 1249.76 for Anna (HT). Divide the number of flips by these numbers, and you get the average waiting time for each pattern: 5000/833.17 ≈ 6 and 5000/1249.76 ≈ 4. The division works because `str_count` counts non-overlapping matches, so after each completed pattern the search starts afresh. So, on average, Anna needs to wait four flips, and Ben six, before getting the pattern.
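The waiting time can also be simulated directly, by flipping until the pattern first shows up and recording how many flips that took. The following is a minimal sketch of that idea, not the code used for the numbers above; `wait_for` is a hypothetical helper name and the seed is arbitrary.

```r
# Flip a fair coin until `pattern` first appears; return the number of flips used.
# `wait_for` is a hypothetical helper, introduced only for this illustration.
wait_for <- function(pattern) {
  target <- strsplit(pattern, "")[[1]]   # e.g. "HT" -> c("H", "T")
  k <- length(target)
  recent <- character(0)                 # the last k flips seen so far
  n <- 0
  repeat {
    n <- n + 1
    recent <- c(recent, sample(c("H", "T"), 1))
    if (length(recent) > k) recent <- recent[-1]   # keep only the last k flips
    if (length(recent) == k && all(recent == target)) return(n)
  }
}

set.seed(1)      # arbitrary seed, only for reproducibility
redo <- 10000
mean(replicate(redo, wait_for("HT")))   # should land near 4
mean(replicate(redo, wait_for("HH")))   # should land near 6
```

The averages should agree with the flips-per-count ratios above, up to simulation noise.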

Pattern of three

Let us extend this to three-coin patterns. Using the following code, we find the average waiting time for three patterns: HHT, HTH, and HHH.

```{r}
library(stringr)

redo <- 10000   # number of simulated games
flip <- 5000    # coin flips per game

# HHT: flips per (non-overlapping) occurrence = average waiting time
streak <- replicate(redo, {
  toss <- sample(c("H", "T"), flip, replace = TRUE, prob = c(1/2, 1/2))
  toss1 <- paste(toss, collapse = " ")
  str_count(toss1, "H H T")
})
flip / mean(streak)

# HTH
streak <- replicate(redo, {
  toss <- sample(c("H", "T"), flip, replace = TRUE, prob = c(1/2, 1/2))
  toss1 <- paste(toss, collapse = " ")
  str_count(toss1, "H T H")
})
flip / mean(streak)

# HHH
streak <- replicate(redo, {
  toss <- sample(c("H", "T"), flip, replace = TRUE, prob = c(1/2, 1/2))
  toss1 <- paste(toss, collapse = " ")
  str_count(toss1, "H H H")
})
flip / mean(streak)
```

The waiting times are 8, 10 and 14 flips, respectively, for HHT, HTH and HHH.
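These simulated values can be checked against a standard result for fair coins, quoted here without derivation: the expected waiting time of a pattern is the sum of 2^k over every length k at which the pattern's first k symbols equal its last k symbols. The sketch below applies that rule; `expected_wait` is a hypothetical helper name.

```r
# Expected waiting time for a pattern of fair-coin flips via its self-overlaps:
# add 2^k for every k where the first k symbols equal the last k symbols.
# `expected_wait` is a hypothetical helper, introduced only for this illustration.
expected_wait <- function(pattern) {
  s <- strsplit(pattern, "")[[1]]
  n <- length(s)
  # overlaps[k] is TRUE when the length-k prefix matches the length-k suffix
  overlaps <- sapply(seq_len(n), function(k) all(s[1:k] == s[(n - k + 1):n]))
  sum(2^(seq_len(n)[overlaps]))
}

sapply(c("HT", "HH", "HHT", "HTH", "HHH"), expected_wait)
```

HT and HHT overlap with themselves only at full length, giving 4 and 8. HH, HTH and HHH also overlap at shorter lengths, which adds the extra terms that bring them to 6, 10 and 14.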

Chances not identical

We will look at the theoretical treatment in another post. But first, let us try to understand it qualitatively. While each of the two-coin sequences (or three-coin sequences in the second game) has the same probability of turning up in any given two (or three) flips, the game takes different pathways depending on what happens after a miss.

Look at the game from Anna's point of view (she needs HT to win). Imagine she starts with H. The next flip can be an H or a T. If it is a T, she wins. If it is an H, she doesn't win, but a win is still just a toss away, as there is a 50% chance of a T on the next flip. In other words, her failure gives her a head start for the next attempt.

Ben, on the other hand, also starts with an H. With another head he wins, but with a tail he has to start all over again: he must get an H and then another H, which has only a 25% chance of happening in the two flips after a failure.
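The head start can be made concrete with a small expected-value calculation. The symbols are introduced here for illustration: $E_0$ is the expected number of flips still needed when no progress has been made, and $E_H$ is the expected number still needed when the last flip was an H. Each flip costs one, and each equation averages over the two outcomes of the next flip.

$$
\begin{aligned}
\text{Anna (HT):}\quad & E_H = 1 + \tfrac{1}{2}\cdot 0 + \tfrac{1}{2}E_H \;\Rightarrow\; E_H = 2, \\
& E_0 = 1 + \tfrac{1}{2}E_H + \tfrac{1}{2}E_0 \;\Rightarrow\; E_0 = 2 + E_H = 4. \\[4pt]
\text{Ben (HH):}\quad & E_H = 1 + \tfrac{1}{2}\cdot 0 + \tfrac{1}{2}E_0, \\
& E_0 = 1 + \tfrac{1}{2}E_H + \tfrac{1}{2}E_0 \;\Rightarrow\; E_0 = 2 + E_H \;\Rightarrow\; E_H = 4,\; E_0 = 6.
\end{aligned}
$$

The only difference is the last term of the first line in each case: Anna's miss leaves her at $E_H$, while Ben's miss sends him back to $E_0$.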
