In an earlier post, we covered the first 10 of the 24 cognitive biases from Charlie Munger's 1995 speech, The Psychology of Human Misjudgment. In this post, we go through the rest.

11. Bias from deprival super-reaction syndrome: This is akin to loss aversion. It describes how strongly we react when something we possess, even something trivial, is taken away. Consider, for instance, the intensity of labour-management negotiations, where every inch is fiercely contested.

12. Bias from envy/jealousy:

13. Bias from chemical dependency and 14. Bias from gambling dependency: These two need little explanation. Both trigger attentional bias, leading individuals to devote disproportionately more attention to addiction-related cues than to neutral stimuli. Businesses such as pubs and casinos are well-versed in exploiting these vulnerabilities, and being aware of their tactics can help you navigate such situations more effectively.

15. Bias from liking distortion: This is the tendency to favour oneself, one's own ideas, and one's own community, and to accept foolish ideas simply because they came from someone you like.

16. Bias from disliking distortion: The opposite of liking distortion. Here, you dismiss ideas simply because they come from people you don't like.

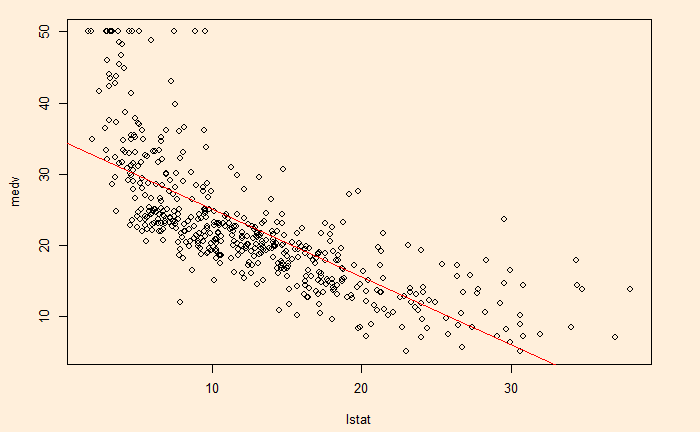

17. Bias from the non-mathematical nature of the human brain: In their natural, untrained state, human brains are notoriously poor at dealing with probabilities. Under this heading, Munger conveniently folds several fallacies of the human mind (crude heuristics, availability, base-rate neglect, hindsight) into one.

18. Bias from fear of scarcity: The fear of scarcity can make otherwise sensible people behave foolishly. A familiar example is the rush to stockpile toilet paper in the early days of the Covid pandemic.

19. Bias from sympathy: This is about leaders keeping employees of dubious character, often out of pity for the person or their family. Munger says that while paying such people proper severance is essential, keeping them in their jobs can drag down the whole organisation.

20. Bias from over-influence and extra evidence:

21. Bias caused by confusion due to information not being properly processed by the mind: Munger stresses that information registers properly in the mind only when we understand the reasons behind it, that is, the answer to the question "why?". The same applies when communicating with others: when presenting key decisions and proposals to stakeholders, it is important to explain the reasoning clearly.

22. Stress-induced mental changes: What happened to Pavlov's dogs, which had been conditioned to certain behaviours, after their cages were flooded is a good example of what stress can do. The dogs lost all the training and conditioned responses they had acquired.

23. Common mental declines:

24. Say-something syndrome: Many people have a habit of talking regardless of their expertise or their ability to influence the decision at hand. Their contributions remain mere soundbites, and Munger advises paying attention instead to the quieter people, who often end up adding real quality.